Website "global" meta descriptions: Just say no!

Did I tell you about the time I had a well-ranking website that suddenly disappeared from Google altogether? No? Oh, well, let me explain the feeling you get when it happens: panicked confusion. You pretty much go through all of the stages of loss. Your top spot was like a relationship that you had worked on really hard, and suddenly she packs her bags and moves to another continent.

A little background on the website: it was a well put together site running on a CMS and had been pretty well SEO’d. Domain authority was good. It had a decent bit of link juice. It was the top result for locally targeted searches for quite a few relevant keywords and had been so for a long time.

Each page had unique content, unique titles, and unique meta descriptions. It was a solid site. The catch is, the site DID have duplicate content. A lot of it.

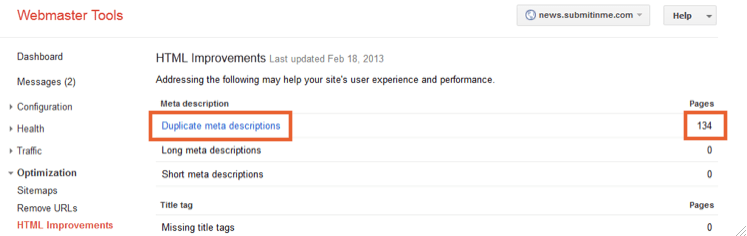

Google Webmaster Tools provides a pretty handy tool for identifying HTML improvements for a site. To be sure, there are better tools for in-depth site analysis, such as RSSeo, but the GWT version will tell you what Google thinks about a few particular issues. Always good to know.

Google Webmaster Tools site analysis

At first glance, all of the pages on our sitemap were A-OK. Upon second glance, the issue was obvious. We had a ton of pages with duplicate meta descriptions and page titles. The trouble came from a pretty basic photo gallery popup extension. The search crawlers interpreted the image popup links slightly differently than browsers do and created URLs for pages that didn’t exist. Since this was a dynamic site, the CMS would take the URL and try to display it. The “phantom” pages didn’t have any content, but they DID have meta descriptions and page titles. In fact, they all had the exact same descriptions and titles.

Stranger still, the descriptions and titles didn’t match any that were used on any of the normal site pages. After much cursing, I finally found that the global titles and description options were turned on. Evidently, somewhere in development, someone had entered a meta description that would be applied globally to all pages if they didn’t already have one. One tiny checkbox.
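If you want to catch this kind of thing before Google does, you can group your pages by title and description and see which ones collide. Here is a minimal Python sketch of that idea. The `MetaParser` class, the `find_duplicates` function, and the sample pages are all made up for illustration; it assumes you already have each page's HTML in hand (from a crawl or an export) and is not how Webmaster Tools does it.

```python
from collections import defaultdict
from html.parser import HTMLParser


class MetaParser(HTMLParser):
    """Collects the <title> text and the meta description of one HTML page."""

    def __init__(self):
        super().__init__()
        self.title = ""
        self.description = ""
        self._in_title = False

    def handle_starttag(self, tag, attrs):
        attrs = dict(attrs)
        if tag == "title":
            self._in_title = True
        elif tag == "meta" and (attrs.get("name") or "").lower() == "description":
            self.description = (attrs.get("content") or "").strip()

    def handle_endtag(self, tag):
        if tag == "title":
            self._in_title = False

    def handle_data(self, data):
        if self._in_title:
            self.title += data


def find_duplicates(pages):
    """Map each (title, description) pair shared by 2+ pages to their URLs.

    `pages` is a dict of URL -> HTML source.
    """
    groups = defaultdict(list)
    for url, html in pages.items():
        parser = MetaParser()
        parser.feed(html)
        groups[(parser.title.strip(), parser.description)].append(url)
    return {key: urls for key, urls in groups.items() if len(urls) > 1}


# Hypothetical sample: one real page and two "phantom" gallery pages that
# fell back to the same global title and description.
SAMPLE_PAGES = {
    "/about": '<title>About Us</title><meta name="description" content="Who we are">',
    "/gallery?img=1": '<title>Acme</title><meta name="description" content="Welcome to our site">',
    "/gallery?img=2": '<title>Acme</title><meta name="description" content="Welcome to our site">',
}

duplicates = find_duplicates(SAMPLE_PAGES)
for (title, description), urls in duplicates.items():
    print(f"{len(urls)} pages share {title!r} / {description!r}: {urls}")
```

Any group with more than one URL deserves a look, and a group that is bigger than your whole sitemap is a pretty loud hint that a global default is filling in the blanks.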

Wanna know what Google thinks of a site that has three times as many duplicate pages as it does actual pages? It’s the same way I feel about Justin Bieber. Vomit.

The site was identified as spam, and the algorithm deleted it from the Google index. In times past, this wasn’t something that would happen automatically. A physical person at Google would examine site data and issue a penalty if it was spam. When this happened, they would send you an email to your Webmaster Tools account informing you of the manual penalty.

When the algorithm itself calls you spam, there is no notification. It simply does its thing and gets on with life. This is unfortunate because we could have minimized the damage had we known about it right when the change happened.

Once the issue was identified, fixing it was not nearly as simple as you would think. It *should* just be a matter of turning off the global descriptions and fixing the broken image popup extension, but that would have been too easy.

Naturally though, those were our first steps. We submitted another sitemap to Google and let them do their thing. The crawl and index took forever. When your site is ranked VERY poorly, Google doesn’t really put any priority on crawling it. Eventually the crawl did happen, and we went to check on how things had gone. NOTHING had changed, despite the whole site having been crawled and indexed.

Even though the site itself had no links pointing to the faulty pages, the pages were still in Google’s cache. In fact, it would have been better to leave the phantom pages in place and just fix their metadata. Since the Googlebot didn’t see links to them, it didn’t crawl them. No crawl, no update. So the old versions were still considered valid.

Next step: manually delete the pages from the index. This process is not difficult, but there are some specific steps that Google makes you take to get it done.

First, the page must cease to exist. No redirects. It must return a 404 error. If you don’t want to go that route, then you have to block the URL in robots.txt or through other means. Then, you can put in a request through Webmaster Tools to delete the pages. They are deleted for 90 days. If the pages still exist and get crawled again after that, they will be reindexed.

We went double whammy on them. We deleted the pages AND blocked their old links using robots.txt. Then we resubmitted the sitemap and waited.
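Before you file the removal requests, it’s worth double-checking that your robots.txt rules really do cover the phantom URLs and nothing else. Here is a rough Python sketch using the standard library’s `urllib.robotparser`. The rules, the `example.com` URLs, and the `blocked_urls` helper are hypothetical stand-ins, not our actual setup.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical robots.txt rules blocking the gallery popup paths.
ROBOTS_TXT = """\
User-agent: *
Disallow: /gallery/popup/
"""

# Hypothetical URLs: two phantom pages and one real page.
URLS = [
    "https://example.com/gallery/popup/1",
    "https://example.com/gallery/popup/2",
    "https://example.com/about",
]


def blocked_urls(robots_txt, urls, user_agent="Googlebot"):
    """Return the URLs that the given robots.txt text disallows for user_agent."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return [url for url in urls if not parser.can_fetch(user_agent, url)]


blocked = blocked_urls(ROBOTS_TXT, URLS)
for url in blocked:
    print("blocked:", url)
```

If a real page shows up in the blocked list, or a phantom one doesn’t, fix the rules before you resubmit anything.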

Voila. The pages were finally gone. It took some time, but eventually the rankings started coming back. We still haven’t undone all of the damage, but it’s a LOT better than not being shown at all! Moral of the story? Do NOT turn on global meta descriptions or global page titles. Google’s Matt Cutts has said that it would be better to not have descriptions at all than to have duplicates.

Remember, as Gruff McGooglestuff says, "Just say no."